Adventures in Cookie Consent & Rogue Cookie Validation

Foreword

For deep analytics practitioners in the new era of privacy rules, the question is not whether we want to be respectful of and responsive to user tracking preferences. The question is how to put in place a set of checks that ensures we are being respectful without the rest of our work grinding to a complete halt.

Anyone who has rolled out a popular cookie consent solution such as OneTrust, or been tasked with monitoring a wide range of properties for rogue cookies, can attest that the challenges are often daunting. As an added twist, most QA automation tools that are supposed to come to our aid in this mission are still trailing behind. Their main deficiency is that they don't make it easy to interact with the page, which is crucial for any kind of user consent emulation.

Without further ado, we want to cover some use cases relating to cookie validation that are becoming increasingly common. Our main goal is to show that it is possible to efficiently automate even complex user journeys that expose the effects of different levels of consent on tracking. As a bonus, we will also show that, in one fell swoop, you can monitor for rogue cookies and the rogue trackers that may be setting them.

The Use Case

Let's say we recently implemented OneTrust on a key web property. We finally got it working, and now we realize that validating the actual effects of different consent levels is going to be an ongoing battle. It is absolutely crucial to our business to respect user privacy preferences. This includes not just ensuring that OneTrust consent levels are respected throughout, but also that only fully blessed martech vendors are doing any kind of cookie-setting on our website.

Solution Outline

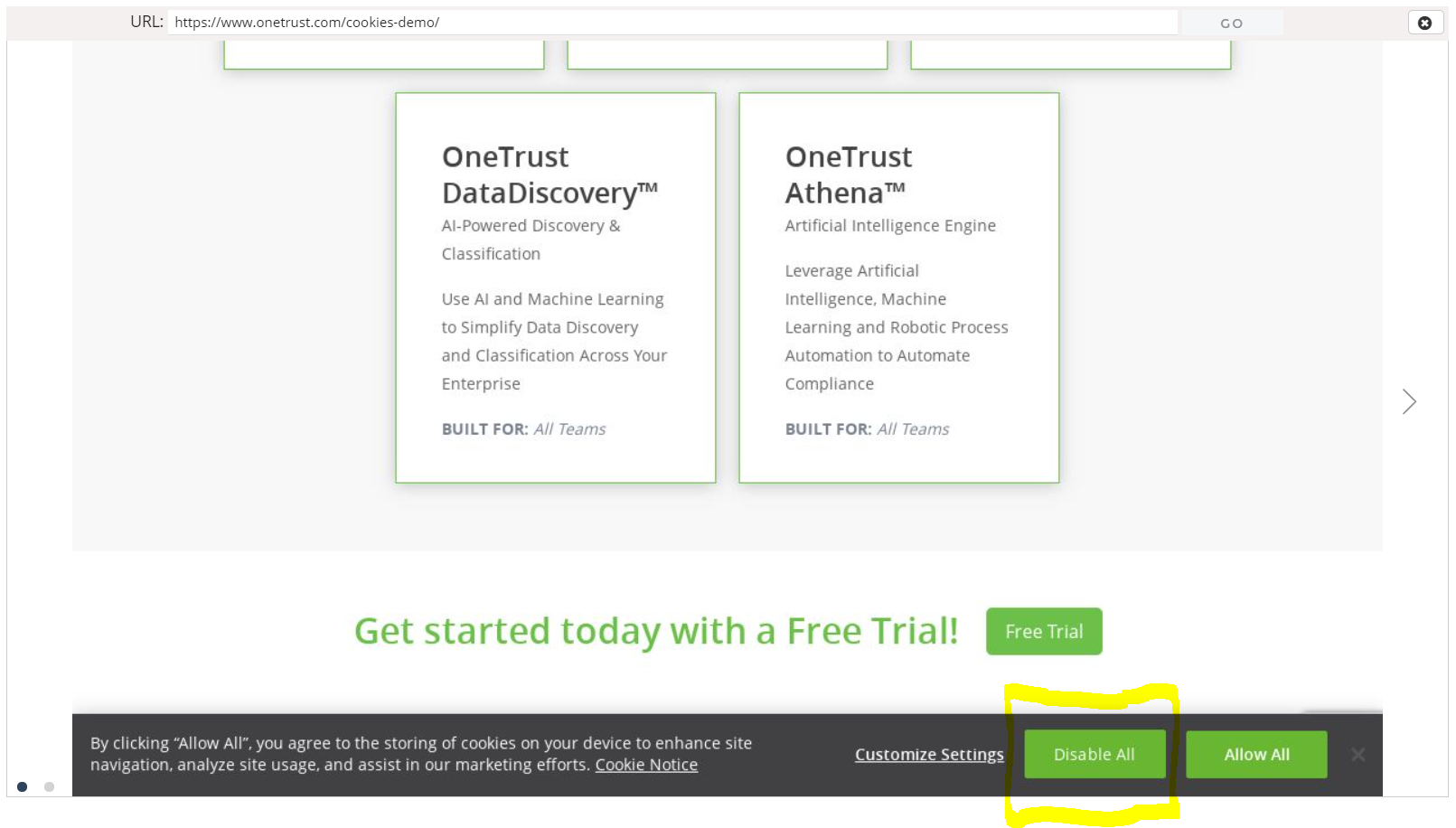

We begin by designing a quick scenario in which a user lands on the homepage of the site and declines to be tracked:

Our automation framework picks up the click on "Disable All" and stores it in a way that can be replayed many times without a human behind the wheel.

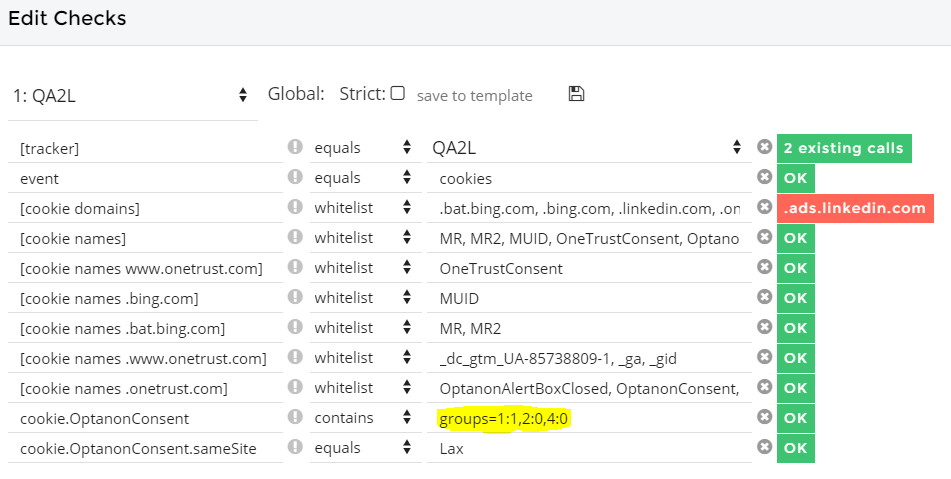

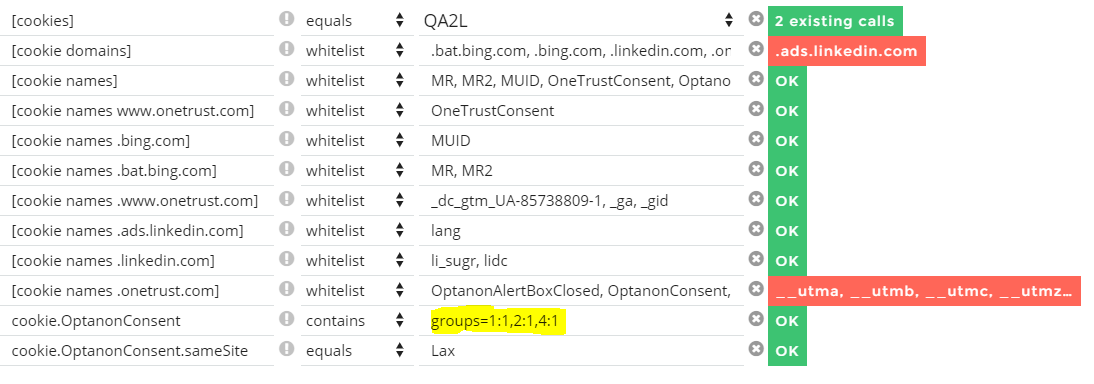

But that's skipping ahead. In the next step, we inspect the cookies post-click and ensure that our OneTrust implementation responded correctly to the user's stated preferences:

Readers who are familiar with how OneTrust works already know that what we're looking for is a set of groups in the OptanonConsent cookie. The value of this cookie is exposed by our QA automation solution and we can define a check that will ensure this value corresponds correctly to the user's choice.
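For readers who want to see what such a check boils down to, here is a minimal Python sketch. It assumes the cookie value is a URL-encoded query string with a `groups` field of the form `C0001:1,C0002:0,...`, and that `C0001` is the always-on "strictly necessary" group. Group IDs are configurable per OneTrust account, so treat these IDs and the sample value as assumptions, and the function names as hypothetical rather than part of any tool's API.

```python
from urllib.parse import parse_qs

# Assumption: "C0001" is the always-on "strictly necessary" group.
# Adjust to match the group IDs configured for your own property.
ALWAYS_ON_GROUPS = {"C0001"}


def parse_consent_groups(cookie_value: str) -> dict[str, bool]:
    """Return {group_id: enabled} from a raw OptanonConsent cookie value."""
    fields = parse_qs(cookie_value, keep_blank_values=True)
    raw_groups = fields.get("groups", [""])[0]
    groups: dict[str, bool] = {}
    for entry in raw_groups.split(","):
        if not entry:
            continue
        group_id, _, flag = entry.partition(":")
        groups[group_id] = flag == "1"
    return groups


def groups_violating_decline(cookie_value: str) -> list[str]:
    """List groups still enabled after "Disable All", ignoring always-on ones."""
    groups = parse_consent_groups(cookie_value)
    return sorted(
        group_id
        for group_id, enabled in groups.items()
        if enabled and group_id not in ALWAYS_ON_GROUPS
    )


# Made-up sample value for illustration only.
sample = "version=6.10.0&groups=C0001%3A1%2CC0002%3A0%2CC0003%3A0%2CC0004%3A0"
print(parse_consent_groups(sample))
print(groups_violating_decline(sample))
```

An empty list from `groups_violating_decline` means the decline was honored; anything else names the groups that stayed switched on.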

In the same breath, we have an opportunity to inspect other cookies on the page at this time, and define a set of allowable domains or cookie names. If a rogue domain or cookie appears in any subsequent test runs, we will be immediately alerted to its presence.
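The allowlist idea can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how any particular QA tool implements it: cookies are assumed to arrive as dictionaries with `name` and `domain` keys, a cookie passes if either its name or its domain is allowed, and all names and domains below are made up.

```python
def domain_allowed(cookie_domain: str, allowed_domains: set[str]) -> bool:
    """True if the cookie domain equals an allowed domain or is a subdomain of it."""
    host = cookie_domain.lstrip(".").lower()
    return any(
        host == allowed or host.endswith("." + allowed)
        for allowed in allowed_domains
    )


def find_rogue_cookies(
    cookies: list[dict[str, str]],
    allowed_domains: set[str],
    allowed_names: set[str],
) -> list[dict[str, str]]:
    """Return cookies whose name and domain are both missing from the allowlists."""
    rogue = []
    for cookie in cookies:
        if cookie["name"] in allowed_names:
            continue
        if domain_allowed(cookie["domain"], allowed_domains):
            continue
        rogue.append(cookie)
    return rogue


# Hypothetical cookie jar captured after the consent click.
observed = [
    {"name": "OptanonConsent", "domain": ".example.com"},
    {"name": "session_id", "domain": "www.example.com"},
    {"name": "uid", "domain": ".tracker.example.net"},
]
for cookie in find_rogue_cookies(observed, {"example.com"}, {"OptanonConsent"}):
    print("Rogue cookie:", cookie["name"], "from", cookie["domain"])
```

Matching on a leading dot plus the allowed domain keeps lookalike hosts such as `notexample.com` from slipping through a naive suffix comparison.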

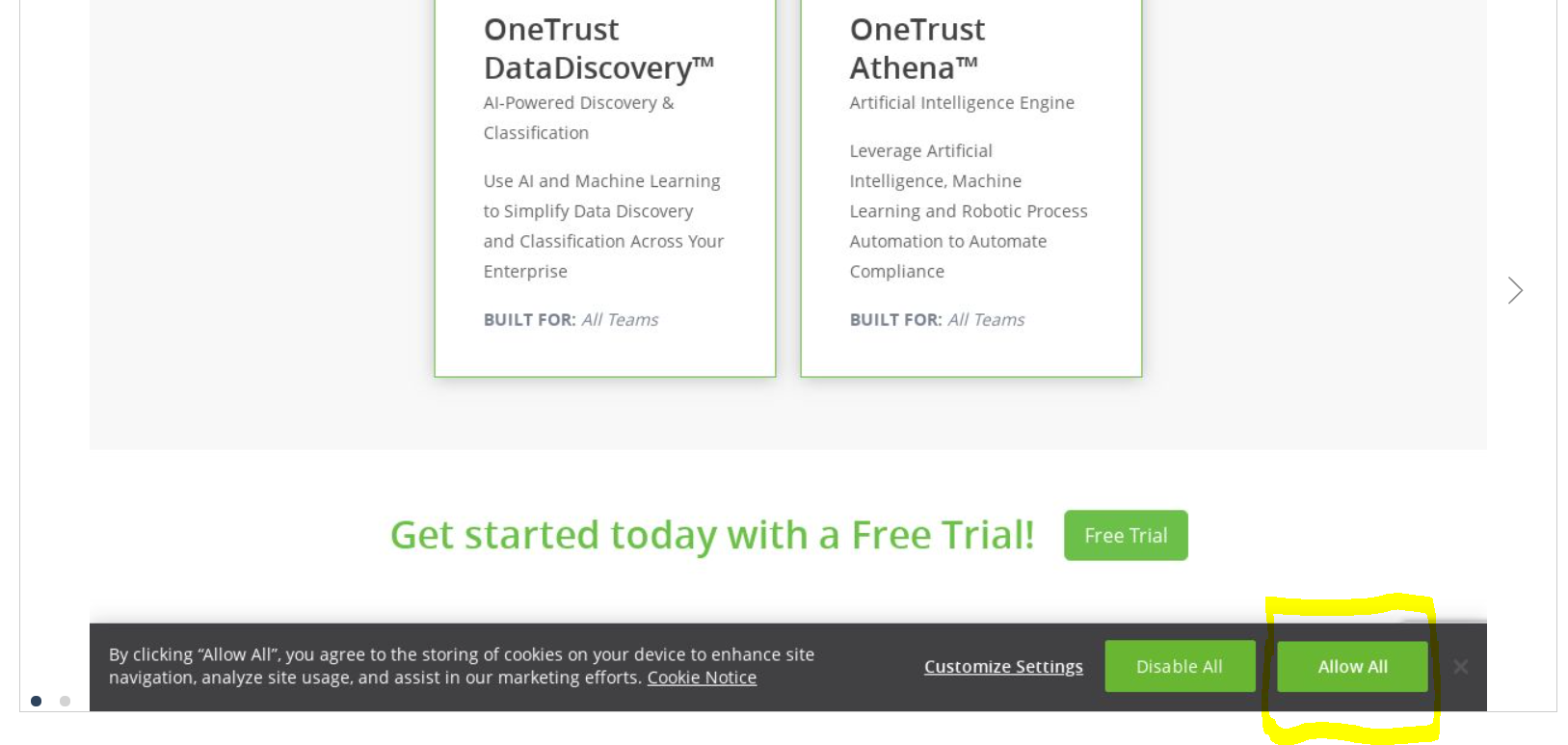

Next, we create an alternative flow in which the user has opted to "Allow All" in the OneTrust consent footer:

We add a similar check for consent groups, which is previewed immediately and rubber-stamped in green by our automation solution.

This time around, we also decide to apply a check on the cookies being set by OneTrust itself. We find out quickly that they are setting GA campaign cookies. In this particular case, we're using OneTrust's demo site, so this is okay. But obviously you may want to lock things down a lot more when OneTrust is running on your own website:

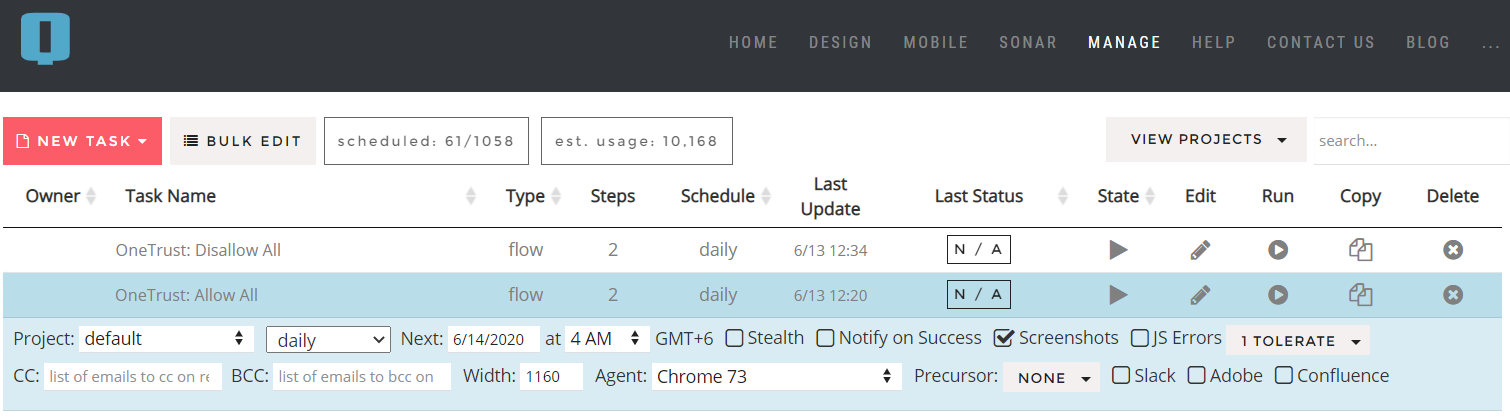

We now have two tasks in our scheduling interface, one for each basic choice a user may make:

We can run these tasks ad hoc whenever we know impactful changes to the site have taken place. But to be extra safe, we can also schedule them to run daily and alert us to any disruptions in OneTrust functionality, as well as any unsanctioned cookies that may rear their heads while we are not looking.

Taking Things Further

With the help of our capable QA automation platform, we've quickly arrived at a baseline for our consent implementation. And we also added some monitoring for rogue cookies in the process. But we're not done yet.

For one, the effects of the OneTrust consent groups setting have to be picked up properly by our tag manager (such as Google Tag Manager or Adobe Launch) and applied correctly to a potentially large number of vendor tags.

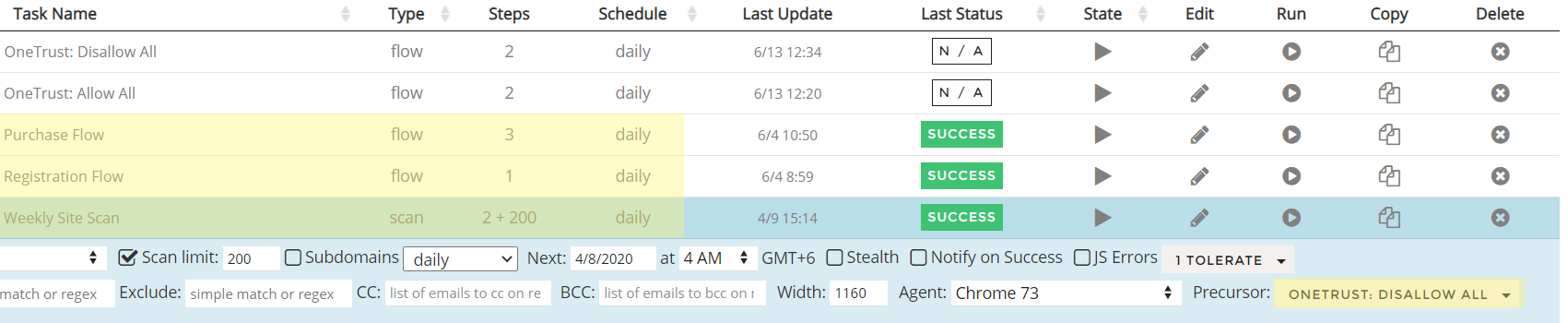

Luckily for us, we already have automated test coverage for all of our key user journeys, and we have some site-wide scanning at the page level.

All we have to do next is apply the new OneTrust selection tasks as "precursors" to some of our existing tasks, and optionally modify the checks to reflect what tags should and should not fire in each case:
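To make the "should and should not fire" idea concrete, here is a small Python sketch of the comparison behind such a check. It assumes each tag is governed by exactly one consent group and that the set of tags that actually fired has been captured during the run. The tag names, group assignments, and `TagRule` structure are hypothetical and do not represent the configuration format of any tag manager or QA platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TagRule:
    tag: str             # hypothetical tag name
    required_group: str  # consent group that must be enabled for the tag to fire


def evaluate_tags(
    rules: list[TagRule], consent: dict[str, bool], fired: set[str]
) -> tuple[list[str], list[str]]:
    """Return (tags that fired without consent, consented tags that did not fire)."""
    fired_without_consent = []
    missing_despite_consent = []
    for rule in rules:
        permitted = consent.get(rule.required_group, False)
        did_fire = rule.tag in fired
        if did_fire and not permitted:
            fired_without_consent.append(rule.tag)
        elif permitted and not did_fire:
            missing_despite_consent.append(rule.tag)
    return fired_without_consent, missing_despite_consent


# Assumed mapping of tags to consent groups, for illustration only.
rules = [
    TagRule("analytics_pageview", "C0002"),
    TagRule("ad_pixel", "C0004"),
]
declined = {"C0001": True, "C0002": False, "C0004": False}
print(evaluate_tags(rules, declined, fired={"ad_pixel"}))
```

Reporting both directions matters: a tag firing without consent is a privacy problem, while a consented tag that stays silent is a data quality problem.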

In Conclusion

Obviously, we left out a lot towards the end of this blog post. There's definitely room for a much more in-depth look at how to validate OneTrust's multiple consent levels and their effects on deep interactions and the KPI tracking they fire.

But what we wanted to show today is that the first steps toward comprehensive privacy cookie validation don't have to be hard.
