Auto-Detect Bot Traffic in Google Analytics with GTM

by Nikolay Gradinarov

Introduction

It is easy for spider/robotic traffic to infiltrate your Google Analytics data and wreak havoc on your KPIs. Correctly identifying robotic traffic, on the other hand, is a difficult undertaking.

Google Analytics is of course capable of identifying and filtering some bot traffic with the built-in "Exclude all hits from known bots and spiders" switch, which you can flip individually at the view level. This option, however, relies on the IAB list of known bot user agents, and the reality is that the user agent string is easy to manipulate. As bots and spiders gain sophistication in a continuous arms race, many of them will easily bypass these kinds of filters.

At the same time, for most organizations it becomes increasingly important to separate out human traffic as much as possible. But custom approaches to additional bot filtering that rely on IP and IP-based lookup data have been hampered in recent months by Google Analytics disabling some key dimensions (Network Domain, Service Provider), removing ISP Organization and ISP Domain from filtering options, and the overall shift towards complete IP anonymization.

While some of the heretofore standard methods for detecting bots are no longer easy to apply, there are still some weapons left in the analyst's arsenal. In this blog post we'll discuss an off-the-beaten-path approach that relies on two dimensions: screen resolution (the width and height of the screen in pixels) vs. browser viewport (the width and height of the browser window).

Solution Overview

The premise is simple. When an actual human visitor views a website, their browser window height and width are expected to be smaller than or equal to the dimensions of their screen. If the browser's dimensions exceed the size of the screen, it's a signal that something fishy is afoot.

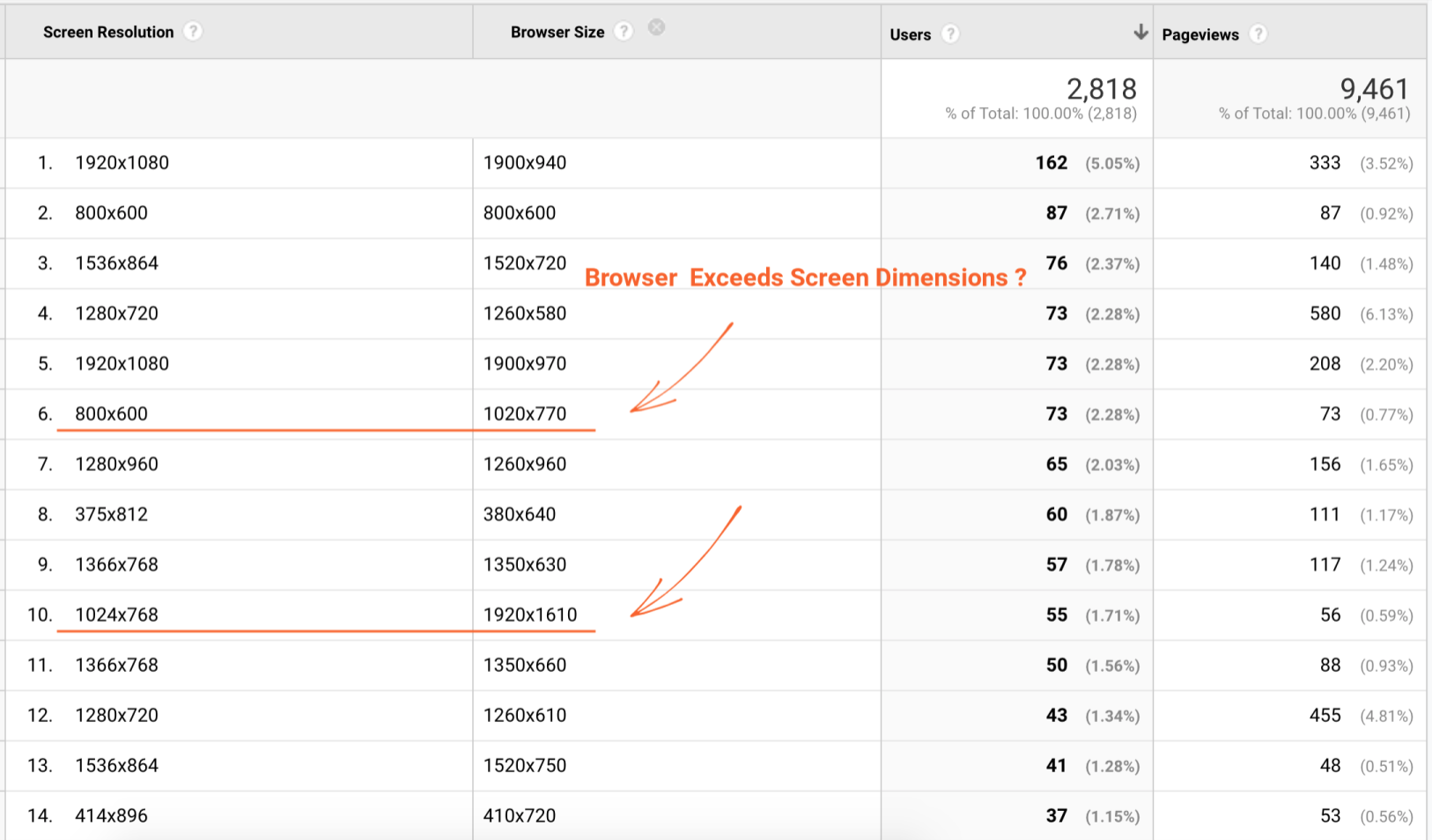

First, we investigate examples of this anomaly manually using the built-in Google Analytics dimensions Screen Resolution and Browser Size:

Focusing on line item #6 from the above report, we can see 73 visitors with a screen resolution set to 800 x 600 and browser dimensions of 1020 x 770, exceeding the screen measurements by more than 150 pixels in both directions.

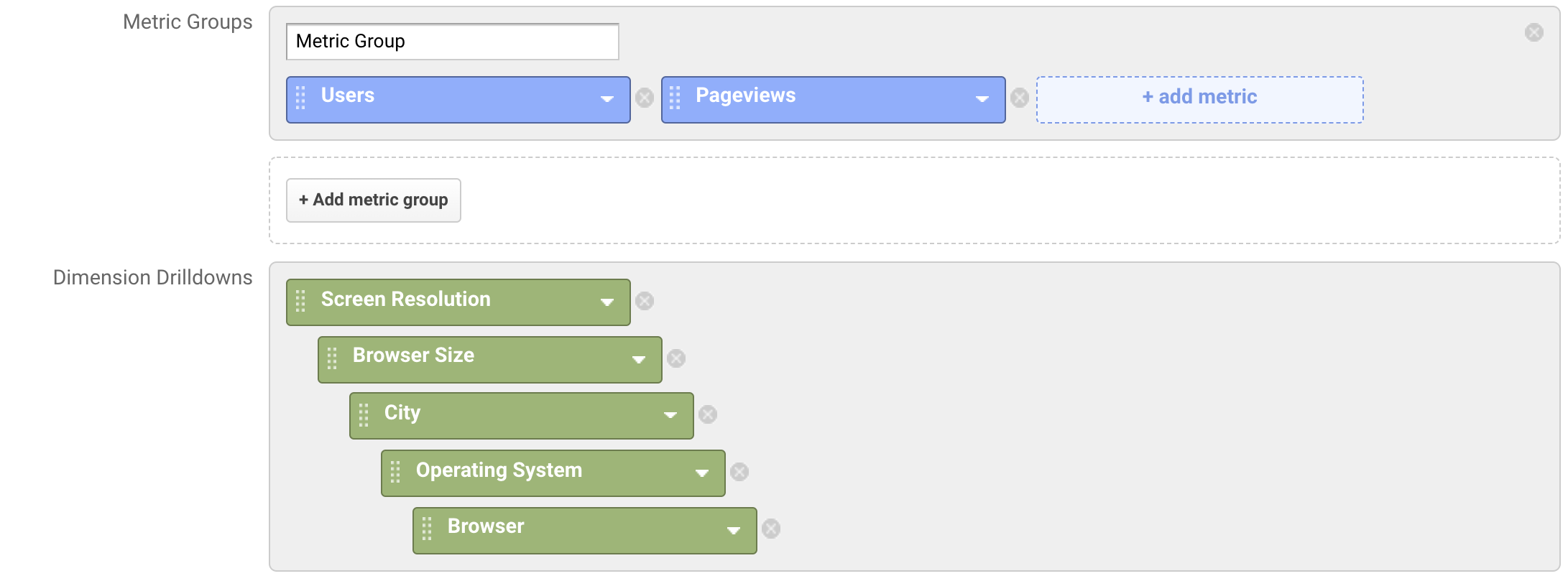
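To make the arithmetic concrete, here is a minimal sketch that computes how far a viewport overshoots the screen on each axis. The helper name `viewportOvershoot` and the object shapes are hypothetical, chosen only for illustration; the numbers are the ones from line item #6.

```javascript
// Hypothetical helper (not part of GA or GTM): returns how many pixels
// the browser viewport exceeds the screen by on each axis, or 0 if it fits.
function viewportOvershoot(screenSize, viewportSize) {
  return {
    width: Math.max(0, viewportSize.width - screenSize.width),
    height: Math.max(0, viewportSize.height - screenSize.height)
  };
}

// Values taken from the report row discussed above.
var overshoot = viewportOvershoot(
  { width: 800, height: 600 },   // Screen Resolution
  { width: 1020, height: 770 }   // Browser Size
);

console.log(overshoot); // { width: 220, height: 170 }
```

The viewport is 220 pixels wider and 170 pixels taller than the screen it supposedly sits on.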

By itself, this is already highly irregular. But we can drill in to discover further oddities. Let's build a custom report with the following settings:

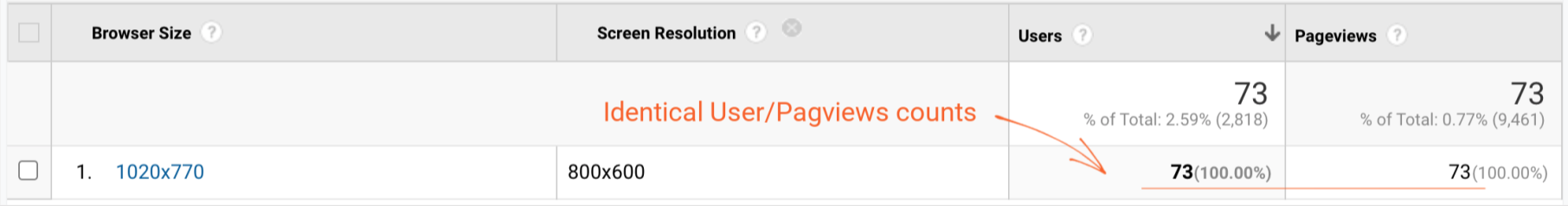

1. The first thing that pops out is that the number of Pageviews is equal to the number of Users, meaning each user viewed a single page (a 100% bounce rate). This is another signal: with bot activity we generally see one of two extremes, either a very high bounce rate, as in this case, or the exact opposite, almost no bounces:

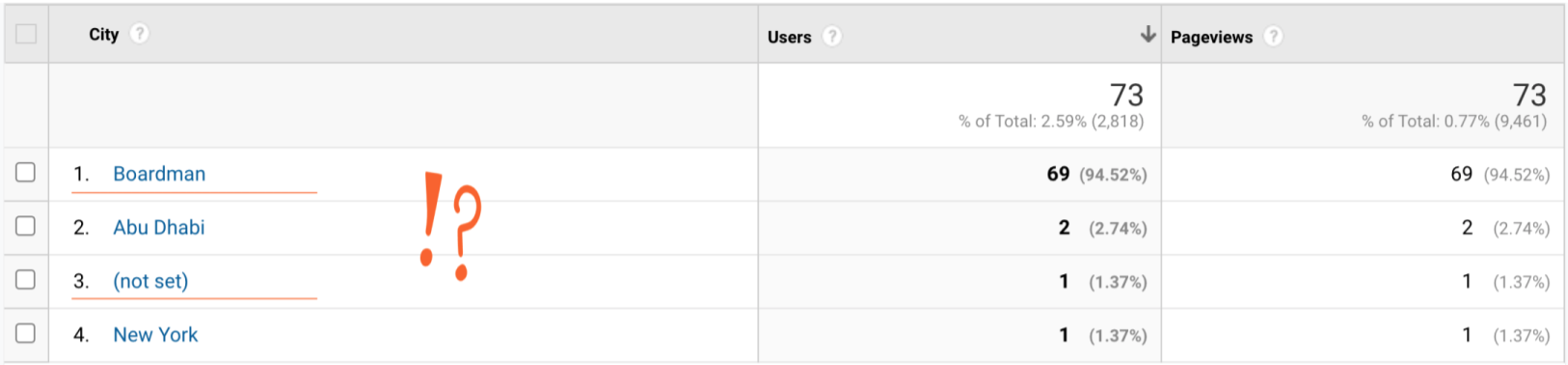

2. Drilling into the City dimension, we can see that the majority of the requests come from highly suspicious locations such as Boardman (a well-known Amazon Web Services data center) and, of course, the mysterious (not set):

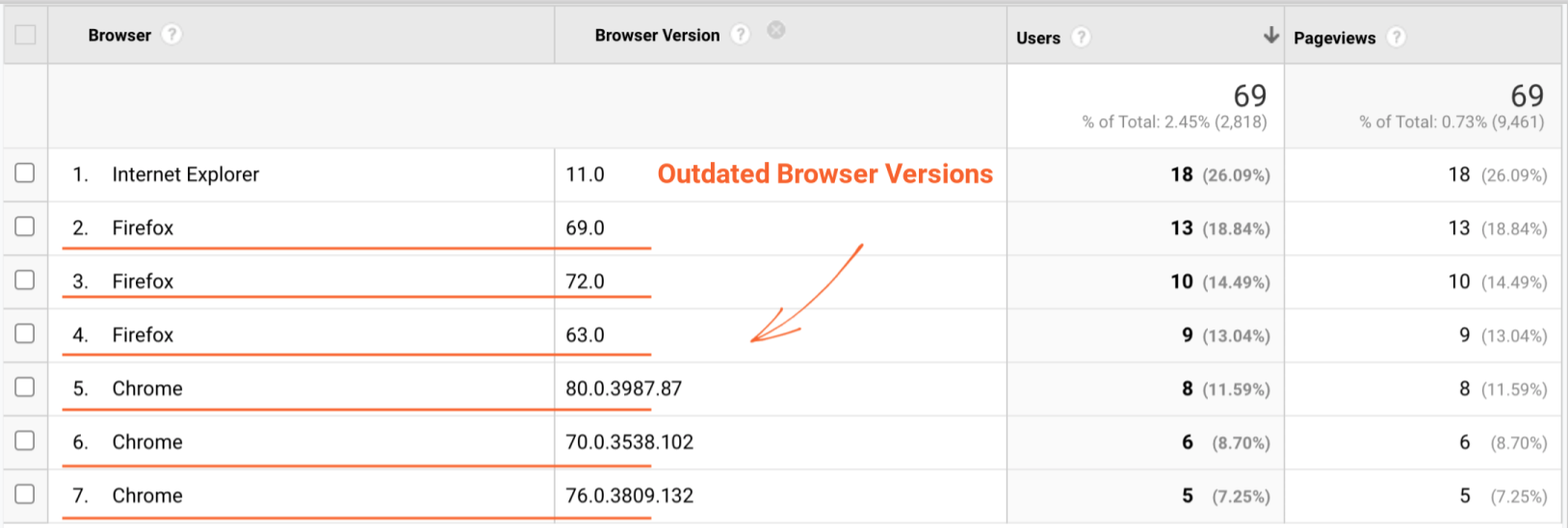

3. Examining Boardman in more detail, we can see further clues that this traffic lacks flesh and blood. All visitors are supposedly on a Windows desktop, with the majority of the traffic coming from outdated Chrome and Firefox versions. The assumption is that a real visitor's Chrome or Firefox version is usually much more recent thanks to auto-updates, whereas bots and spiders are reluctant, and often unable, to hop on the latest version as quickly.

When combined, the clues are a pretty strong indicator that nearly all of the traffic sporting a bigger browser window than its screen size is indeed robotic by nature. So can we turn this manual analysis into a process for flagging such spiders?

GTM Configuration

While building a GA custom report is fairly straightforward, the first step (comparing browser size and screen resolution across thousands of screen resolution/browser size combinations) can be time-consuming. With an assist from GTM, however, this process can be automated:

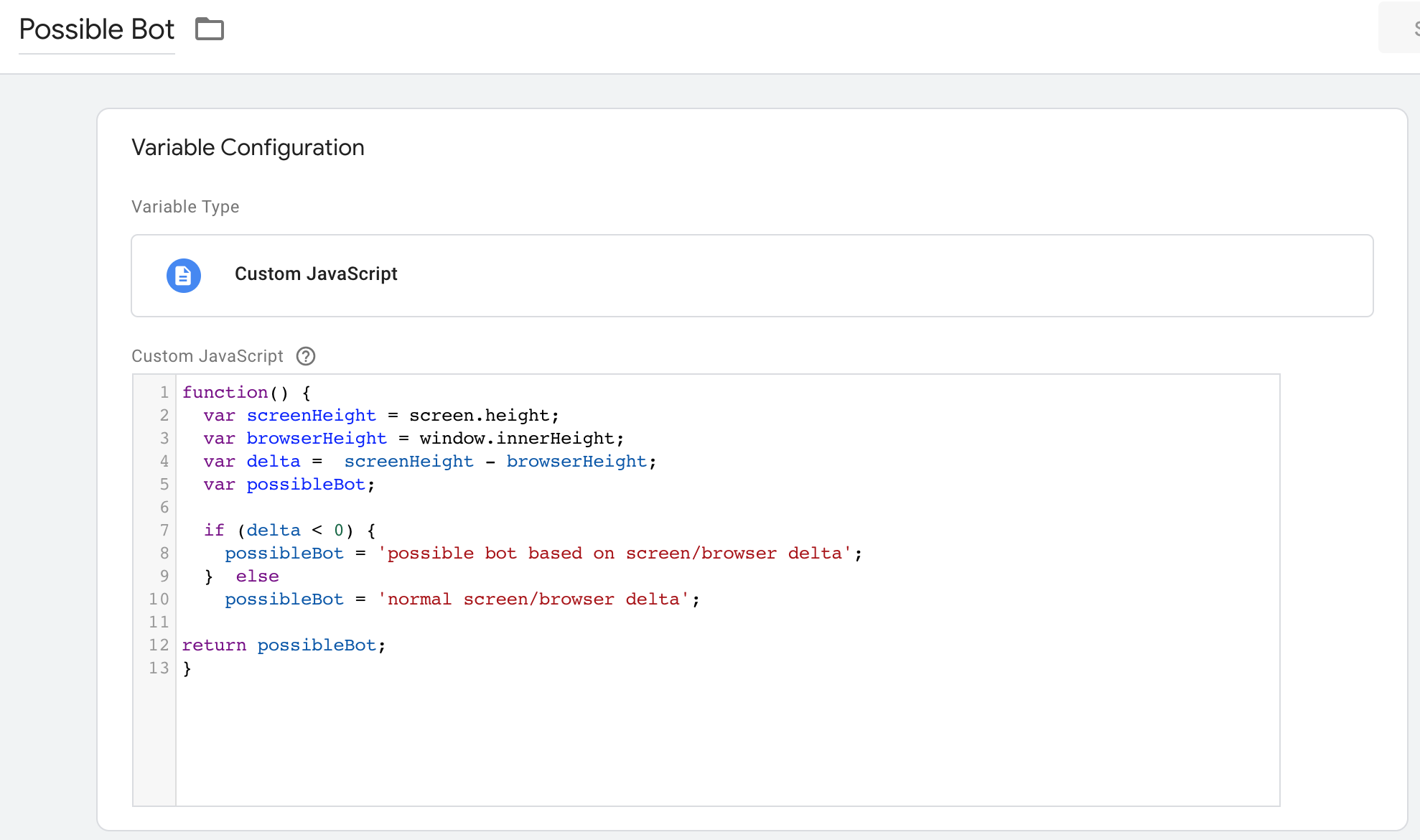

1. Create a Custom JavaScript variable that will capture and evaluate the screen and browser height:

Sample Code:

    function() {
      // Screen height and browser viewport height, both in pixels.
      var screenHeight = screen.height;
      var browserHeight = window.innerHeight;

      // A negative delta means the viewport is taller than the screen.
      var delta = screenHeight - browserHeight;

      if (delta < 0) {
        return 'possible bot based on screen/browser delta';
      }
      return 'normal screen/browser delta';
    }

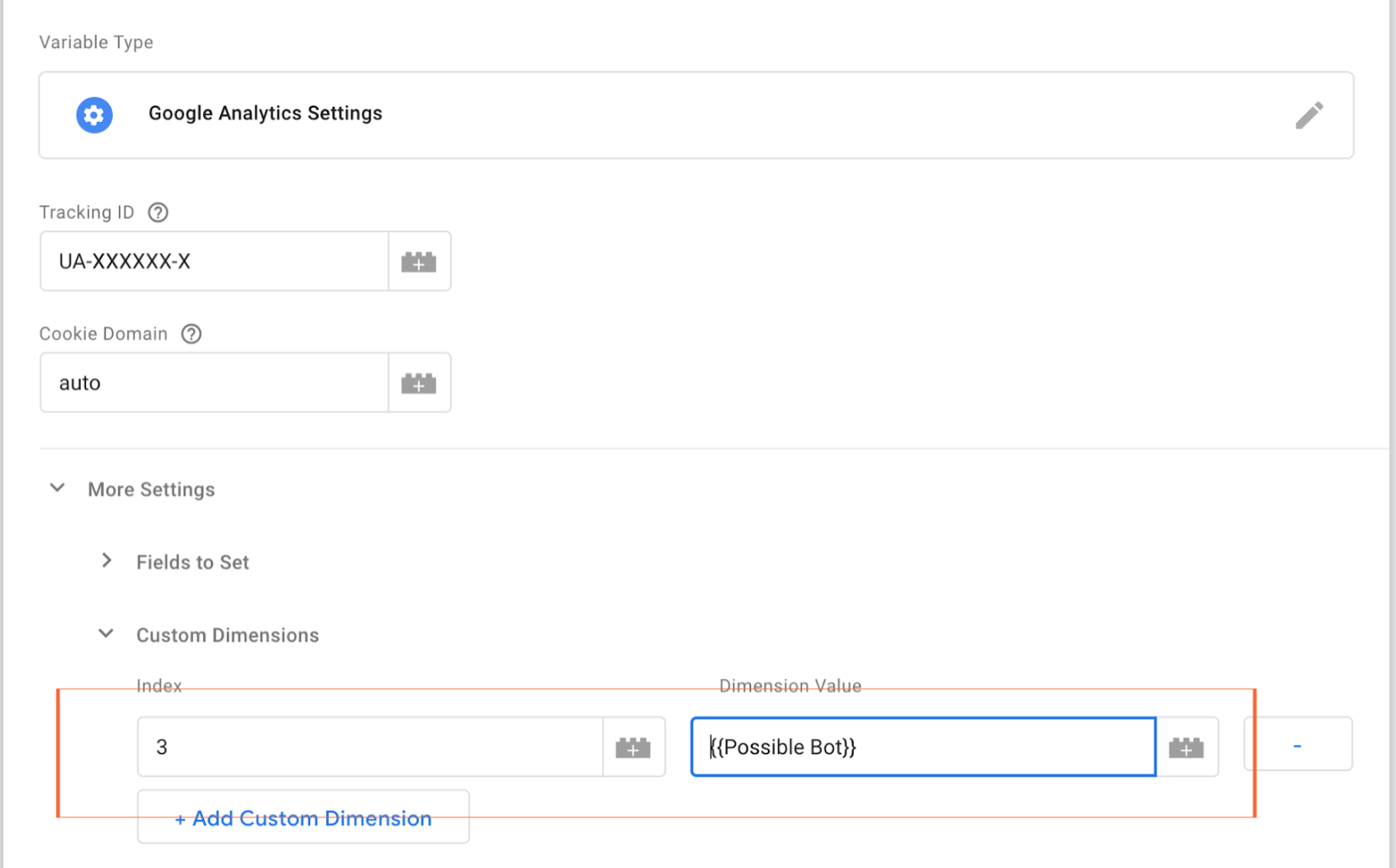

2. Configure a "Google Analytics Settings" variable to pass the value of {{Possible Bot}} to Google Analytics in the dimension index configured in the step below. In this example, we use dimension slot #3:
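The sample variable in step 1 compares heights only, while the premise covers both height and width. A minimal sketch of a variant that checks both axes might look like the following. The function name `classifyScreenDelta`, the `win` parameter, and the third "unavailable" label are assumptions introduced here for illustration, not part of the original setup.

```javascript
// Sketch: flag a hit when the viewport exceeds the screen on either axis.
// `win` is a window-like object so the logic can be exercised outside a browser.
function classifyScreenDelta(win) {
  var scr = win.screen || {};
  var values = [scr.width, scr.height, win.innerWidth, win.innerHeight];

  // Assumption: if any measurement is missing, report that instead of guessing.
  for (var i = 0; i < values.length; i++) {
    if (typeof values[i] !== 'number' || isNaN(values[i])) {
      return 'screen/browser delta unavailable';
    }
  }

  var exceeds = win.innerWidth > scr.width || win.innerHeight > scr.height;
  return exceeds
    ? 'possible bot based on screen/browser delta'
    : 'normal screen/browser delta';
}

// In GTM, a Custom JavaScript variable must be a single anonymous function,
// so the helper would be defined inside it, for example:
//   function() { /* classifyScreenDelta defined here */ return classifyScreenDelta(window); }

console.log(classifyScreenDelta({
  screen: { width: 800, height: 600 },
  innerWidth: 1020,
  innerHeight: 770
}));
```

The two original labels are kept unchanged so existing reports and segments built on them would continue to match.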

GA Configuration

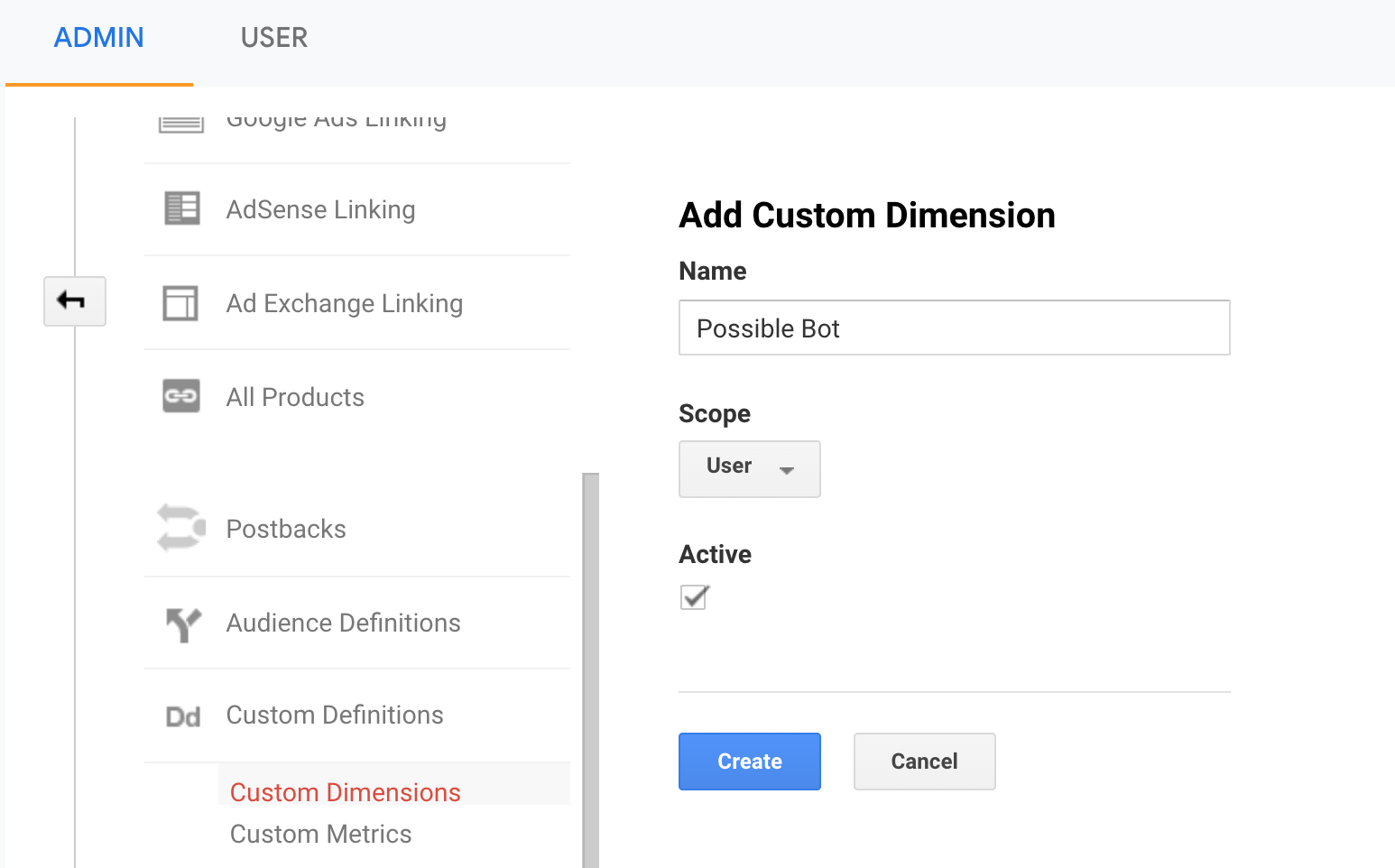

In Google Analytics, under Admin/Custom Definitions, configure a custom dimension for an available slot (in this example index #3) as shown:

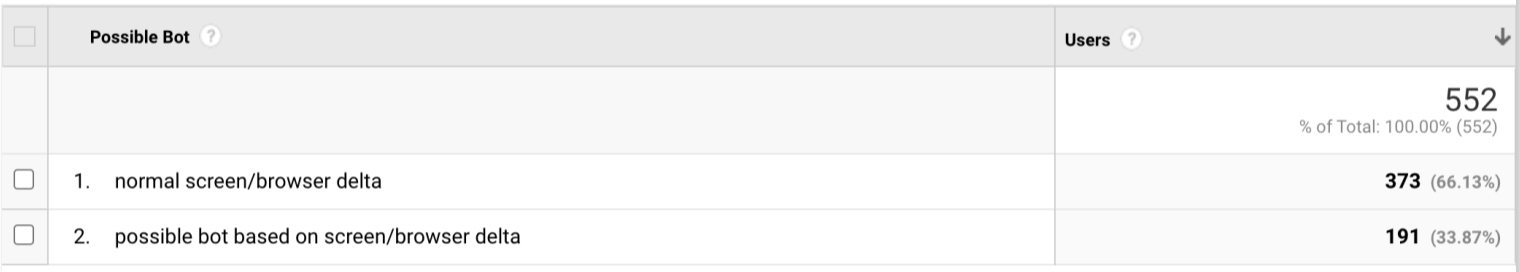
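For readers who think in analytics.js terms, the index mapping above amounts to setting a field named `dimension3` on the tracker. The sketch below assumes a Universal Analytics (analytics.js) setup; GTM handles this for you, so the code is only meant to show what the setting corresponds to. The helper name `customDimensionFields` is hypothetical.

```javascript
// Hypothetical helper: builds the analytics.js field object for a
// custom dimension at the given index, e.g. { dimension3: '...' }.
function customDimensionFields(index, value) {
  var fields = {};
  fields['dimension' + index] = value;
  return fields;
}

var fields = customDimensionFields(3, 'normal screen/browser delta');
console.log(fields); // { dimension3: 'normal screen/browser delta' }

// Only runs on a page where analytics.js has defined the global `ga` queue.
if (typeof ga === 'function') {
  ga('set', fields);
}
```

If you use a different slot, the index passed here has to match the index shown in Admin/Custom Definitions.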

Once data is flowing, you can pair the new custom dimension with other dimensions, use it in segments, or create custom reports to help you flag suspicious traffic to your website more quickly.
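As one illustration of working with the new dimension outside the GA interface, the sketch below splits exported report rows by the flag and computes the flagged share of users. The row shape (`city`, `possibleBot`, `users`) and the sample numbers are made up for the example and do not come from a real report.

```javascript
var BOT_LABEL = 'possible bot based on screen/browser delta';

// Splits rows by the custom dimension value and sums users on each side.
function summarizeByBotFlag(rows) {
  var flaggedUsers = 0;
  var totalUsers = 0;
  rows.forEach(function (row) {
    totalUsers += row.users;
    if (row.possibleBot === BOT_LABEL) {
      flaggedUsers += row.users;
    }
  });
  return {
    flaggedUsers: flaggedUsers,
    totalUsers: totalUsers,
    flaggedShare: totalUsers === 0 ? 0 : flaggedUsers / totalUsers
  };
}

// Made-up sample rows, for illustration only.
var summary = summarizeByBotFlag([
  { city: 'Boardman', possibleBot: BOT_LABEL, users: 40 },
  { city: 'Chicago', possibleBot: 'normal screen/browser delta', users: 160 }
]);

console.log(summary); // { flaggedUsers: 40, totalUsers: 200, flaggedShare: 0.2 }
```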

Conclusion

Automatically comparing the delta between client-reported browser viewport dimensions and screen size can be a significant time saver and a valuable addition to your toolbox for identifying modern spider activity.


QA2L is a data governance platform specializing in the automated validation of tracking tags/pixels. We focus on making it easy to automate even the most complicated user journeys / flows and to QA all your KPIs in a robust set of tests that is a breeze to maintain.


Tags: Data Quality Google Analytics Tips