Data Over-Collection and Analytics Maintenance (with Jason Thompson)

Introduction

by Nikolay Gradinarov

It's not every day that you hear you need to collect less data. But that's exactly what Jason Thompson will tell you.

Jason and his team at 33 Sticks are on the front lines of data collection and analytics implementations. In an industry often inundated with buzzwords and clichés, Jason stands out, opting for a practical and measured approach. There is permanent value in a transparent delivery that puts the client’s interest first, even when that interest deviates from the latest fads in analytics.

I had a chance to chat with Jason a few days ago. We discussed the true cost of data collection and the importance of implementing sustainable analytics solutions. Our conversation started around this tweet:

If you hire me to help your company become smarter with data, the first thing I will do is throw away half of the data you are currently collecting and you will instantly become happier, healthier, and more informed. #SustainableAnalytics

— jason (@usujason) January 6, 2021

But as often happens, the dialog quickly took off on multiple tangents. We hope you enjoy some of the highlights below!

Theory and Practices in Data Collection

In the early days of digital analytics the MarTech stacks were much simpler—an organization might have used a single analytics vendor, an email tool, a survey platform, and perhaps a conversion tag such as DoubleClick. Within an analytics implementation, there may have been only a handful of available custom variables, which forced companies to really think strategically about what data should be captured in these variables.

Fast forward to today, and we live in a world where organizations habitually over-collect data. It's not uncommon for an organization to use dozens of MarTech vendors. Within any given analytics or marketing tool, there are often hundreds of custom variables (Adobe Analytics, for example, offers 1,000 conversion events and 250 eVars). This drives the notion that data collection is cheap and results in endless deferral of any decisions on what data points get captured and what gets special treatment ("We'll start by tracking everything!").

To be sure, the maxim that more data is better than less data does come in handy when addressing complex business questions from multiple angles. But there's no such thing as a free lunch, and there's always a cost to every data point collected and analyzed. In some cases, tools are marketed as free (such as the standard Google Analytics offering), so an organization might not have any reservations about adding yet another MarTech vendor.

On paper, a company may draw up elaborate plans for the future use of that data—exporting it out of the clickstream analytics platform, making it available in cloud storage/compute instances such as AWS or GCP, running advanced statistical analyses on top of the exported data, you name it. In practice, the company may still struggle with the basics of producing accurate and trustworthy counts of even boilerplate metrics such as visits and visitors.

While enabling data collection has its own set of challenges, the root of the problem is that there is little thought given to data maintenance. That’s where the notion of capturing everything proactively begins to unravel. The reality is that even selective data collection usually gets progressively messier with time. This lowers the utility of the reporting and may lead to a complete loss of trust in the data. Without proper maintenance, data quickly becomes technical debt. In the end, many companies feel so burdened with data debt that they give up on gleaning any kind of decision-making power from frameworks that still cost them hundreds of thousands of dollars per year.

Factors Contributing to Data Over-Collection

Turnover

Most of us have a strong urge to “create in our own image.” A data practitioner may inherit a superb and stable implementation and still decide to leave their mark by re-architecting it in a significant (and costly) way. Practitioners on average stay 12 to 18 months at a given company. Soon, someone new comes along and tears down what their predecessor did, only to rebuild it in a way they think is best.

The end result—instead of evolving and judiciously gardening an implementation to increase its yield, the organization may be stuck in a perpetual cycle of "we're gearing up to get some really great results out of our brand new X platform by the end of this year."

Lack of Executive Leadership

From the very early days of digital analytics implementations, the question of who owns digital analytics has been racking up a body count. There is usually no VP-level sponsor in charge of digital analytics. It's usually owned by a technical implementer. In many contexts, these individuals don’t have the experience and the strategic thinking to ask the difficult business questions. Even if they do, they may feel like they lack the authority to speak up and make waves. At the same time, they love to build new things. So an organization ends up with a lot of cool building blocks, but no clear strategic direction in how they fit together, much less in how data collection can support that structure.

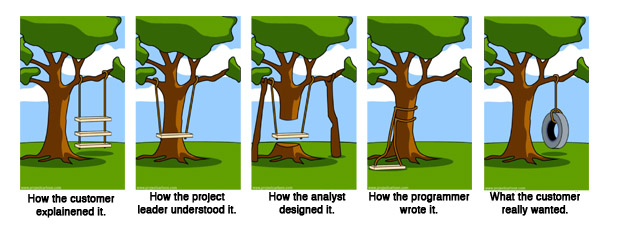

Building in Isolation

In many instances, analytics implementers don't have in-depth experience in analyzing data. They are usually quite familiar with building reports and understand what those reports show, but they are not truly committed to "doing things" with the data and often lack a comprehensive understanding of the business itself. If data collection is done in isolation from the business vision, then it is not really aligned with how the business operates.

Conversely, an organization might have power analysts who command internal attention with the analyses they produce. But those same analysts may lack understanding of how data is collected and sourced. This often leads to brilliantly insightful but critically flawed data story-telling.

Fully understanding the data absolutely must include understanding how data is collected. Add to that a detailed understanding of any attribution, statistical/predictive, and propensity models that are applied to the collected data. To get to that level, it is critical to have a close partnership between analysts and implementers.

The Agency Problem

In no small part, data over-collection is also the byproduct of analytics implementations that have been oversold upfront.

This is indicative of a conflict of interest in which an agency has every incentive to design complex implementations involving more data points, which generally leads to more billable hours and higher fees. Whether the client needs all that data and reporting to address their crucial business questions is not asked at that point. It is not unheard of for an agency to stand up a really elaborate implementation, only for the client to throw up their hands a year down the road and say, “We have all of this data, but we don’t know what to do with it!”

A variation on this theme is unnecessary reimplementation, which happens on both the Google and Adobe Analytics sides of the aisle. Nine times out of ten, migrations happen because some agencies have a lucrative arc hinging on convincing their clients that one vendor is much better than another. The reality is that big agencies receive large revenue streams in the form of kickbacks from any vendor they can sell.

Automation Magic, "MarTech Theater", and Getting #mattgershoffed

One of the driving forces behind over-collecting data is the false notion that a custom data lake can be somewhat magically transformed into a golden goose through machine learning, AI, or whichever glitzy and trendy form of automation comes next. This notion is strongly reinforced in the sales methods of most MarTech vendors and forms an integral part of the infatuation organizations display repeatedly when buying new tools.

In describing these trends, Jason borrows an apt term coined by Matt Gershoff: "MarTech Theater." Buzzwords can often dominate the agenda, setting up lofty, but often badly misunderstood, expectations that ultimately fail to deliver any practical utility. Then it's on to the next blind date with another vendor.

The Cost of Data—Brainstorming a Formula

Is there a way to estimate the true cost of data and if not, what are the factors that we should consider when trying to put together an estimate?

The way Jason breaks it down is reminiscent of trying to assess a computational complexity problem with Big-O notation:

1. Cost of purchasing a particular solution is more or less a constant: O(1).

2. Cost of implementation could be linear and based on the number of data points/variables: O(n).

3. Cost of maintenance can also be expressed in a linear fashion and is dependent on the number of variables that are being maintained: O(n).
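To make the three terms above concrete, here is a minimal sketch in Python. It is only an illustration of the constant-plus-linear shape of the breakdown, not a formula Jason proposed: the function name, the parameters, and every dollar figure are hypothetical, and treating maintenance as a recurring yearly per-variable cost is an added assumption.

```python
def estimated_cost(purchase, implement_per_var, maintain_per_var_year,
                   n_vars, years):
    """Rough cost of an analytics implementation (all inputs hypothetical).

    purchase              -- one-time cost of the solution, the O(1) term
    implement_per_var     -- cost to implement one variable, the first O(n) term
    maintain_per_var_year -- yearly upkeep per variable, the second O(n) term
                             (assumed here to recur every year)
    """
    implementation = implement_per_var * n_vars
    maintenance = maintain_per_var_year * n_vars * years
    return purchase + implementation + maintenance


# Hypothetical figures: the same tool, tracking 100 variables vs. 50.
full = estimated_cost(100_000, 500, 200, n_vars=100, years=3)
half = estimated_cost(100_000, 500, 200, n_vars=50, years=3)
print(full, half, full - half)
```

In this toy model the purchase price stays the same no matter how many variables are tracked, so all of the savings from collecting less come from the two linear terms, and the maintenance share keeps growing with every additional year the variables are kept.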

The unfortunate reality is that when organizations budget for purchasing and implementing an analytics solution, the maintenance part is often conveniently forgotten. Maintenance includes all sorts of activities, from ongoing tag monitoring, to regression testing for compliance with privacy regulations, report re-configuration, documentation, education, etc. If these activities are left unspoken, this opens the door to a set of much more unpredictable cost factors:

1. What's the cost of losing trust in the data?

2. What's the cost of bad decisions based on inaccurate data?

3. What's the opportunity cost of decisions not made because of untrustworthy data?

It is clear that there is no easy formula, and there is no use pretending that there are simple universal measures one can take to avoid data over-collection. It's even worse to pretend to know what should be collected vs. not: significant business subject matter expertise and deep knowledge of the tools are required in order to arrive at these decisions.

We hope that our post gave you some sense of the importance of making judicious choices in data collection as early in the process of standing up an implementation as possible, and the importance of factoring in the ongoing maintenance of your analytics implementations.

About QA2L

QA2L is a data governance platform specializing in the automated validation of tracking tags/pixels. We focus on making it easy to automate even the most complicated user journeys / flows and to QA all your KPIs in a robust set of tests that is a breeze to maintain.
